2020 has been a breakthrough year for artificial intelligence, specifically the branch of AI built on machine learning and neural networks, and it's already causing widespread disruption in almost every industry, including forms of creative and artistic expression previously thought to be out of bounds for machines.

One of 2020’s many AI breakthroughs has been OpenAI’s Jukebox project, a neural net that generates music, including singing, with composition and orchestration in a variety of genres and artistic styles. The neural net is self-taught, meaning it starts out completely blank with no musical skills at all. It is then trained on a huge library of recorded music (OpenAI reports a dataset of roughly 1.2 million songs, paired with lyrics and metadata), and it gradually builds its understanding of music as performed by the various artists. When the network is sufficiently trained, it can produce a brand new composition in the style of one artist or as a mashup of several artists. One minute of music takes roughly 9 hours to generate.

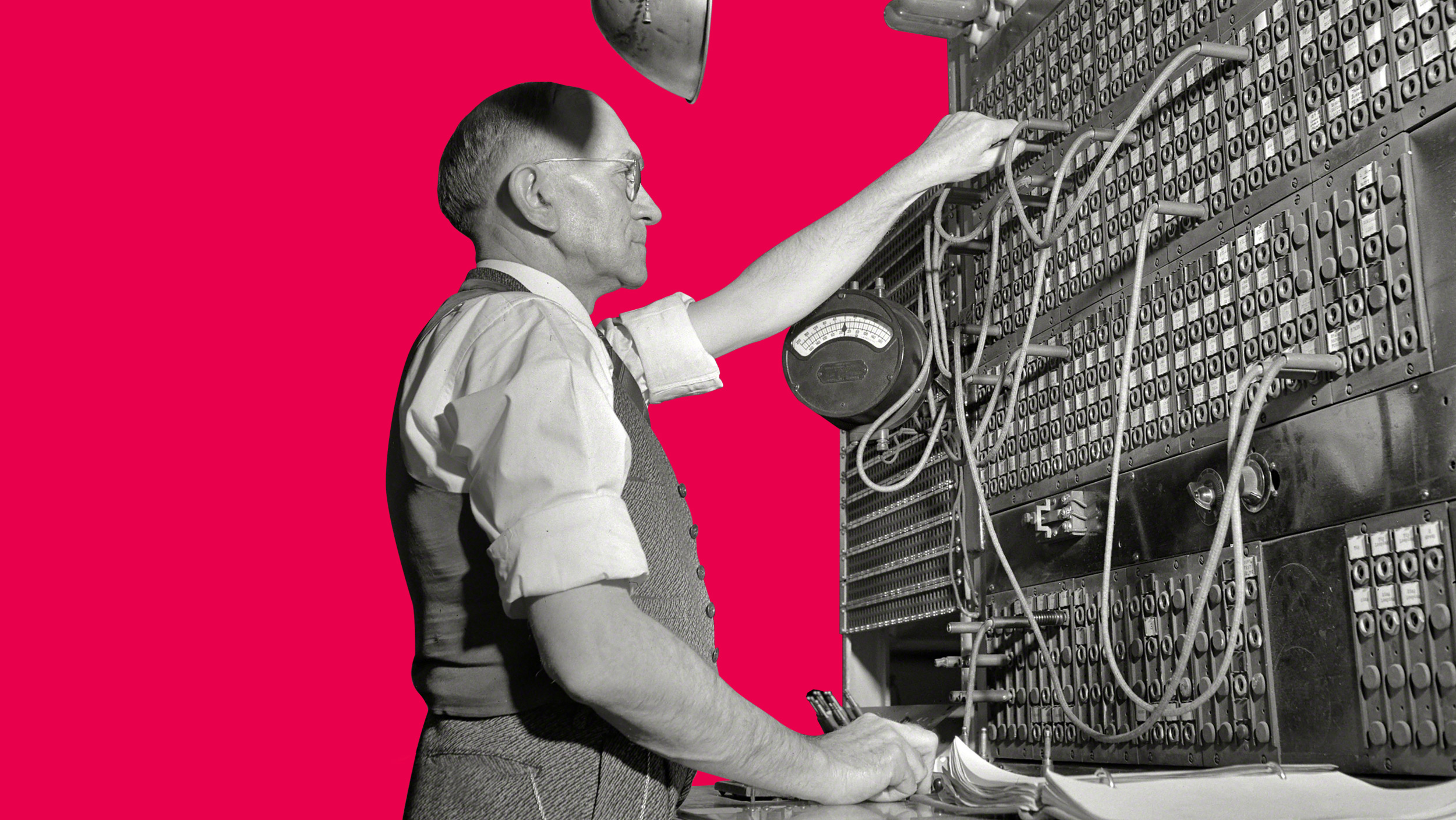

The idea of music-generating machines can be traced back many centuries. We have all seen the self-playing piano in old western movies, and there are some absolutely stunning creations to be found in museums, like a six-piece string orchestra with both organ and percussion. But for all of these mechanical marvels the music was pre-encoded, often as pins on a rotating cylinder, indicating the timing, pitch, and velocity of each note and which instrument should play it.

New technologies create new opportunities

At Glitch Studios we go where new technology leads us, exploring new opportunities for our clients’ brands to express themselves creatively.
